Sora can generate complex scenes with multiple characters, specific types of motion, and accurate details of the subject and background. The model understands not only what the user has asked for in the prompt, but also how those things exist in the physical world.

We’ll be taking several important safety steps ahead of making Sora available in OpenAI’s products. We are working with red teamers (domain experts in areas like misinformation, hateful content, and bias) who will be adversarially testing the model.

Each of these is a notable feat of engineering. For a start, training a model with more than 100 billion parameters is a complex plumbing problem: hundreds of individual GPUs (the hardware of choice for training deep neural networks) must be connected and synchronized, and the training data must be split into chunks and distributed among them in the right order at the right time. Large language models have become prestige projects that showcase a company’s technical prowess. Yet few of these new models move the research forward beyond repeating the demonstration that scaling up gets good results.
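As a rough illustration of the "split into chunks and distributed" step, the sketch below assigns training sample indices to workers in a strided, round-robin pattern. The function name `shard_indices` and its parameters are hypothetical, chosen only for this example; real training frameworks use their own samplers and handle shuffling, padding, and synchronization as well.

```python
def shard_indices(num_samples, num_workers, rank):
    """Return the sample indices assigned to one worker.

    Hypothetical helper: worker `rank` takes every `num_workers`-th
    sample, starting at its own rank (a strided, round-robin split).
    """
    if not 0 <= rank < num_workers:
        raise ValueError("rank must be in [0, num_workers)")
    return list(range(rank, num_samples, num_workers))


if __name__ == "__main__":
    # Ten samples shared among four workers.
    for rank in range(4):
        print(rank, shard_indices(10, 4, rank))
```

Every sample lands on exactly one worker, and shard sizes differ by at most one, which keeps the workers roughly in step.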

This post describes four projects that share a common theme of enhancing or using generative models, a branch of unsupervised learning techniques in machine learning.

User-Generated Content: Listen to your customers, who value reviews, influencer insights, and social media trends that can all inform product and service innovation.

Jonathan Ho is joining us at OpenAI as a summer intern. He did most of this work at Stanford, but we include it here as a related and highly creative application of GANs to RL. The standard reinforcement learning setting usually requires one to design a reward function that describes the desired behavior of the agent.

Prompt: Photorealistic closeup video of two pirate ships battling each other as they sail inside a cup of coffee.

The creature stops to interact playfully with a group of tiny, fairy-like beings dancing around a mushroom ring. The creature looks up in awe at a large, glowing tree that seems to be the heart of the forest.

"The new Apollo510 MCU is simultaneously the most energy-efficient and highest-performance product we've ever created."

Next, the model is trained on that data. Finally, the trained model is compressed and deployed to the endpoint devices where it will be put to work. Each of these phases requires significant development and engineering.

There are many possible solutions to mapping the unit Gaussian to images, and the one we end up with might be intricate and highly entangled. The InfoGAN imposes additional structure on this space by adding new objectives that involve maximizing the mutual information between small subsets of the representation variables and the observation.
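For reference, the mutual-information objective described here is usually written as a regularized GAN game. The notation below follows the common presentation of InfoGAN and is not taken from this text: $V(D, G)$ is the standard GAN value function, $z$ is the noise vector, $c$ is the small subset of latent variables being structured, and $\lambda$ is a weighting hyperparameter.

```latex
\min_{G} \max_{D} \; V_I(D, G) = V(D, G) - \lambda \, I\bigl(c;\, G(z, c)\bigr),
\qquad
I(X; Y) = H(X) - H(X \mid Y)
```

Because the generator minimizes $V_I$, the $-\lambda I$ term rewards samples $G(z, c)$ from which the code $c$ can be recovered, which is what pushes the representation toward being less entangled.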

What does it mean for a model to be large? The size of a model (a trained neural network) is measured by the number of parameters it has. These are the values in the network that get tweaked over and over again during training and are then used to make the model’s predictions.
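To make "number of parameters" concrete, here is a minimal sketch that counts the weights and biases of a plain fully connected network. The helper name `count_dense_parameters` and the example layer sizes are assumptions made for illustration; other architectures (convolutions, attention layers) count their parameters differently.

```python
def count_dense_parameters(layer_sizes):
    """Count trainable values in a fully connected network.

    Hypothetical helper: `layer_sizes` lists the width of each layer,
    input first. Each pair of adjacent layers contributes a weight
    matrix (n_in * n_out) plus one bias per output unit (n_out).
    """
    total = 0
    for n_in, n_out in zip(layer_sizes, layer_sizes[1:]):
        total += n_in * n_out + n_out
    return total


if __name__ == "__main__":
    # A small classifier: 784 inputs, 128 hidden units, 10 outputs.
    print(count_dense_parameters([784, 128, 10]))  # prints 101770
```

Even this tiny network has about a hundred thousand parameters, which is roughly a million times smaller than a 100-billion-parameter model.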


In addition, the performance metrics provide insights into the model's accuracy, precision, recall, and F1 score. For a number of the models, we provide experimental and ablation studies to showcase the impact of various design choices. Check out the Model Zoo to learn more about the available models and their corresponding performance metrics. Also explore the Experiments to learn more about the ablation studies and experimental results.
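The four metrics named above can be computed from binary labels as in the sketch below. The function name `binary_metrics` and the sample labels are made up for illustration and do not come from the Model Zoo; the formulas are the standard definitions.

```python
def binary_metrics(y_true, y_pred):
    """Return accuracy, precision, recall, and F1 for 0/1 labels.

    Hypothetical helper using the standard definitions; a ratio whose
    denominator is zero is reported as 0.0.
    """
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)

    accuracy = (tp + tn) / len(pairs) if pairs else 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}


if __name__ == "__main__":
    # Illustrative labels only: 3 true positives, 3 true negatives,
    # 1 false positive, 1 false negative.
    truth = [1, 0, 1, 1, 0, 0, 1, 0]
    preds = [1, 0, 0, 1, 0, 1, 1, 0]
    print(binary_metrics(truth, preds))
```

With these sample labels all four metrics come out to 0.75, since the one false positive and one false negative balance each other.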

Accelerating the Development of Optimized AI Features with Ambiq’s neuralSPOT

Ambiq’s neuralSPOT® is an open-source AI developer-focused SDK designed for our latest Apollo4 Plus system-on-chip (SoC) family. neuralSPOT provides an on-ramp to the rapid development of AI features for our customers’ AI applications and products. Included with neuralSPOT are Ambiq-optimized libraries, tools, and examples to help jumpstart AI-focused applications.

UNDERSTANDING NEURALSPOT VIA THE BASIC TENSORFLOW EXAMPLE

Often, the best way to ramp up on a new software library is through a comprehensive example, which is why neuralSPOT includes basic_tf_stub, an illustrative example that leverages many of neuralSPOT’s features.

In this article, we walk through the example block-by-block, using it as a guide to building AI features using neuralSPOT.

Ambiq's Vice President of Artificial Intelligence, Carlos Morales, went on CNBC Street Signs Asia to discuss the power consumption of AI and trends in endpoint devices.

Since 2010, Ambiq has been a leader in ultra-low-power semiconductors that enable endpoint devices with more data-driven and AI-capable features while reducing energy requirements by up to 10X. They do this with the patented Subthreshold Power Optimized Technology (SPOT®) platform.

Computer inferencing is complex, and for endpoint AI to become practical, these devices have to drop from megawatts of power to microwatts. This is where Ambiq has the power to change industries such as healthcare, agriculture, and Industrial IoT.

Ambiq Designs Low-Power for Next Gen Endpoint Devices

Ambiq’s VP of Architecture and Product Planning, Dan Cermak, joins the ipXchange team at CES to discuss how manufacturers can improve their products with ultra-low power. As technology becomes more sophisticated, energy consumption continues to grow. Here Dan outlines how Ambiq stays ahead of the curve by planning for energy requirements 5 years in advance.

Ambiq’s VP of Architecture and Product Planning at Embedded World 2024

Ambiq specializes in ultra-low-power SoCs designed to make intelligent battery-powered endpoint solutions a reality. These days, just about every endpoint device incorporates AI features, including anomaly detection, speech-driven user interfaces, audio event detection and classification, and health monitoring.

Ambiq's ultra-low-power, high-performance platforms are ideal for implementing this class of AI features, and we at Ambiq are dedicated to making implementation as easy as possible by offering open-source, developer-centric toolkits, software libraries, and reference models to accelerate AI feature development.

NEURALSPOT - BECAUSE AI IS HARD ENOUGH

neuralSPOT is an AI developer-focused SDK in the true sense of the word: it includes everything you need to get your AI model onto Ambiq’s platform. You’ll find libraries for talking to sensors, managing SoC peripherals, and controlling power and memory configurations, along with tools for easily debugging your model from your laptop or PC, and examples that tie it all together.

Facebook | Linkedin | Twitter | YouTube

Josh Saviano Then & Now!

Josh Saviano Then & Now! Danica McKellar Then & Now!

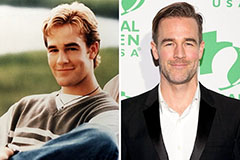

Danica McKellar Then & Now! James Van Der Beek Then & Now!

James Van Der Beek Then & Now! Kelly Le Brock Then & Now!

Kelly Le Brock Then & Now! Marcus Jordan Then & Now!

Marcus Jordan Then & Now!